Every Android developer has lived this moment: you ship a screen, then product wants a copy change. Or a layout tweak. Or a whole new card type on the home feed. Each change means a new build, a new review, a new release, and then you wait for users to update. Some never do.

Server-driven UI tries to fix this. Send the layout from your backend, render it on the client. Companies like Airbnb and Duolingo have been doing this for years, but every team builds its own thing: JSON schemas, custom DSLs, some unholy WebView hybrid. It works, but it's messy.

Now Google has entered the chat. Remote Compose is a new AndroidX library, still in alpha, that lets you write Compose-like code on a server, serialize it into a binary document, and play it back natively on Android. No JSON mapping. No WebViews. Just bytes in, native UI out.

ELI5

Your app is at version 3.2. You want to change a button color or swap a banner on the home screen. The normal path: code the change, build 3.3, push to the Play Store, wait for review, hope users update.

Remote Compose skips that entire cycle. Your server sends the new layout as a tiny file. The app, still 3.2, renders it natively. No update required.

The 30-second version

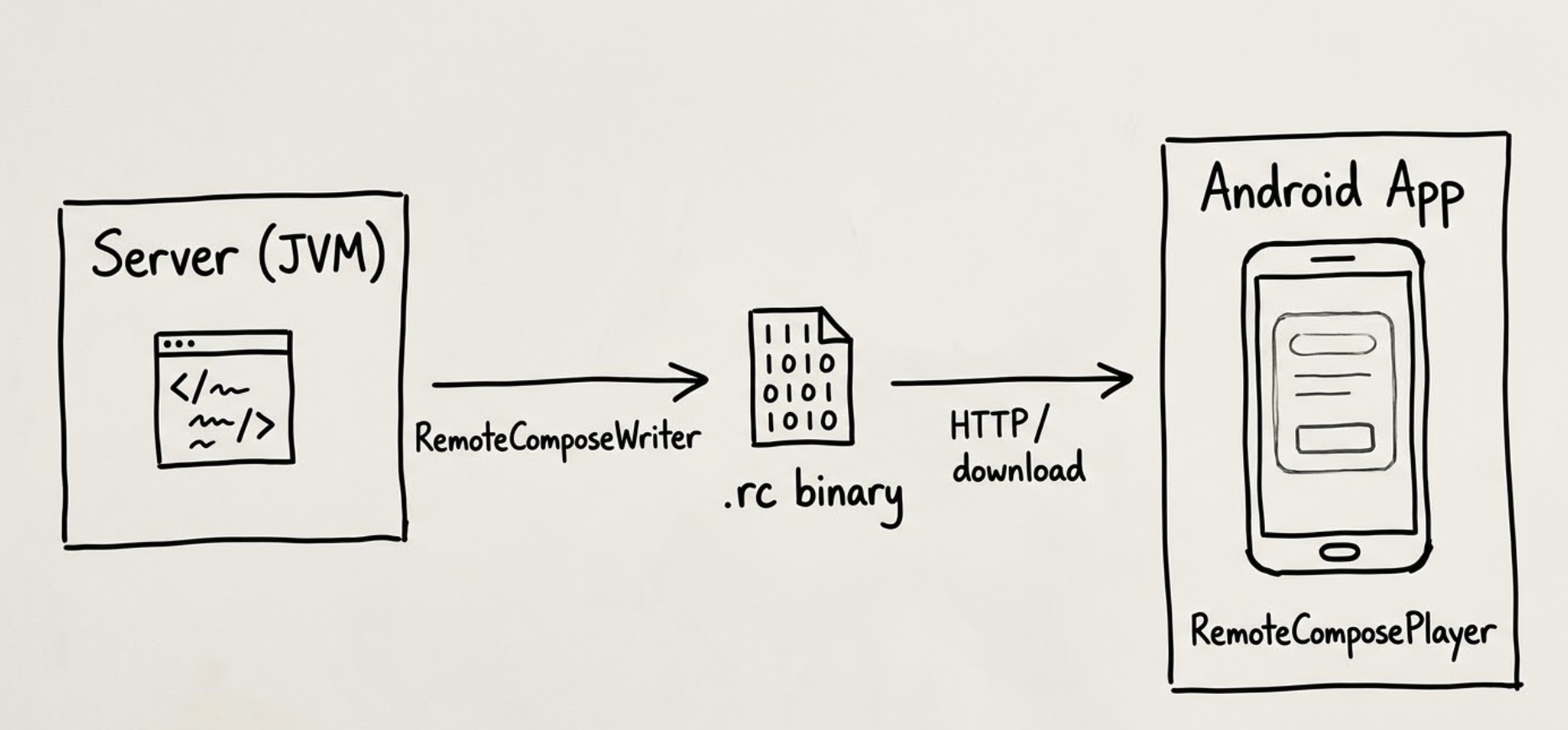

Remote Compose has two sides:

Creation - You write Compose-style code on a plain JVM. A tool called RemoteComposeWriter captures everything you describe (columns, rows, text, images, modifiers, click handlers) and encodes it into a compact binary format.

Playback - Your Android app downloads those bytes and feeds them to RemoteComposePlayer. The player renders everything natively. It doesn't need the original Compose code, your view models, or your business logic. Just the document bytes and the player.

Think of it like a PDF for UI. You author it on one machine. Any compatible reader can display it. Except instead of a static document, this one can scroll, animate, and respond to taps.

Why this matters

If you've worked on a mobile team of any size, you know the release cycle bottleneck. A single copy change can take two to four weeks from decision to delivery. That's why Mercari built their own SDUI system to cut time-to-market, and Nubank built a whole framework from scratch.

But each of these teams had to build their own protocol, schema, and rendering pipeline. That's months of engineering before you render a single remote button. Remote Compose is Google's first-party, standardized answer.

How it actually works

Most SDUI systems work at the component level. Your server sends something like:

    {
      "type": "button",
      "text": "Buy Now",
      "color": "#FF5722",
      "action": "navigate:/checkout"
    }

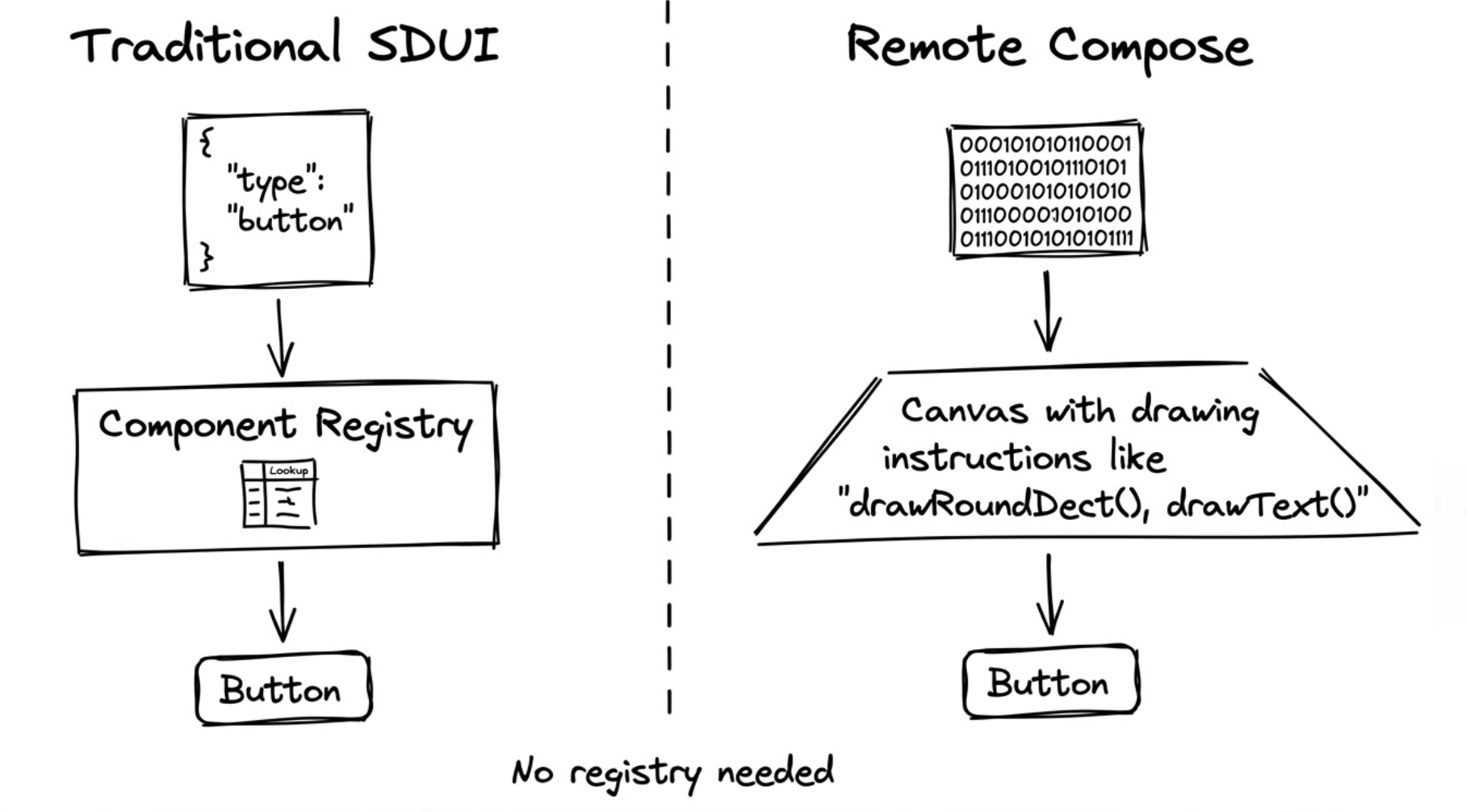

The client receives this JSON, looks up "button" in a component registry, and renders the right Composable. This is how Coinbase and Zalando do it.
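To make the registry lookup concrete, here is a minimal sketch in plain Kotlin. The names `ComponentSpec`, `ComponentRegistry`, and `defaultRegistry` are hypothetical, and the renderer returns a description string instead of a Composable so the example runs without Android. It is not how any of the companies mentioned implement their systems.

```kotlin
// Hypothetical model of one JSON-mapped component; fields mirror the payload above.
data class ComponentSpec(val type: String, val props: Map<String, String>)

// A renderer turns a spec into "UI". Here that is just a description string;
// a real client would emit a Composable or a View instead.
typealias Renderer = (ComponentSpec) -> String

class ComponentRegistry {
    private val renderers = mutableMapOf<String, Renderer>()

    fun register(type: String, renderer: Renderer) {
        renderers[type] = renderer
    }

    // Returns null for unknown types: the client can only render
    // components it shipped with.
    fun render(spec: ComponentSpec): String? = renderers[spec.type]?.invoke(spec)
}

// The set of components this (imaginary) app version knows about.
fun defaultRegistry(): ComponentRegistry = ComponentRegistry().apply {
    register("button") { spec ->
        "Button(text=${spec.props["text"]}, color=${spec.props["color"]}, action=${spec.props["action"]})"
    }
}
```

Asking this registry for a type it has never heard of, say `"carousel"`, returns `null`. That null is the whole limitation of component mapping in one line.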

Remote Compose operates at a lower level. Instead of sending a component name and properties, it sends drawing instructions. Not "render a button," but "draw a rounded rectangle at these coordinates with this fill, then draw the text 'Buy Now' at this position with this font."

The framework defines 93+ operations: the Canvas drawing vocabulary (DRAW_RECT, DRAW_CIRCLE, DRAW_TEXT), plus layout containers, modifiers (padding, borders, click areas), state variables, and animations. These operations get packed into a binary format called RemoteComposeBuffer, compact enough that a simple document fits on an NFC card (~700 bytes) or in a QR code (~2KB).
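To get a feel for why a stream of drawing operations stays small, here is a toy encoder. Everything in it is an assumption made for illustration: the opcode values, the field layout, and the `ToyDocumentWriter` class are invented and are not the real RemoteComposeBuffer format.

```kotlin
import java.nio.ByteBuffer

// Opcode values are made up for this sketch; they are NOT the real Remote Compose opcodes.
const val OP_DRAW_RECT: Byte = 1
const val OP_DRAW_TEXT: Byte = 2

class ToyDocumentWriter(capacity: Int = 1024) {
    private val buffer = ByteBuffer.allocate(capacity)

    // 1 opcode byte + four 32-bit floats = 17 bytes.
    fun drawRect(left: Float, top: Float, right: Float, bottom: Float) = apply {
        buffer.put(OP_DRAW_RECT)
        buffer.putFloat(left).putFloat(top).putFloat(right).putFloat(bottom)
    }

    // 1 opcode byte + two floats + 2-byte length prefix + the UTF-8 text bytes.
    fun drawText(text: String, x: Float, y: Float) = apply {
        val utf8 = text.toByteArray(Charsets.UTF_8)
        buffer.put(OP_DRAW_TEXT)
        buffer.putFloat(x).putFloat(y)
        buffer.putShort(utf8.size.toShort())
        buffer.put(utf8)
    }

    // Copies only the bytes written so far, not the whole backing array.
    fun toByteArray(): ByteArray = buffer.array().copyOf(buffer.position())
}
```

In this toy layout, a rectangle plus the label "Buy Now" comes to 17 + 18 = 35 bytes. The real format carries more information per operation, but the arithmetic shows why a few hundred bytes can hold a usable screen.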

The creation side

On the server (or any JVM), you write code that looks a lot like regular Compose:

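    // Captures the layout described below as a binary document.
    val writer = RemoteComposeWriter()

    writer.addColumn(
        modifier = Modifier.padding(16.dp)
    ) {
        addText("Hello, Remote Compose", fontSize = 24.sp)
        addRow {
            addText("Tap me")
            // This id is what the host app receives when the area is tapped.
            addClickArea { actionId = "button_tapped" }
        }
    }

    // Serialize the captured operations; these are the bytes the client plays back.
    val bytes: ByteArray = writer.toByteArray()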

This runs on a plain JVM. No Android SDK needed. Your server generates the bytes, stores them, and serves them over HTTP.
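The "serve them over HTTP" step can be sketched with nothing but the JDK's built-in `HttpServer`. The `/ui/home` path and the `startDocumentServer` function are hypothetical, and a real backend would add caching, versioning, and authentication on top of this.

```kotlin
import com.sun.net.httpserver.HttpServer
import java.net.InetSocketAddress

// Serves one pre-built document at a hypothetical path.
// Port 0 asks the OS for any free port, which is handy for local experiments.
fun startDocumentServer(document: ByteArray, port: Int = 0): HttpServer {
    // A length of 0 would switch the JDK server to chunked encoding, so insist on content.
    require(document.isNotEmpty()) { "document must not be empty" }

    val server = HttpServer.create(InetSocketAddress(port), 0)
    server.createContext("/ui/home") { exchange ->
        exchange.responseHeaders.add("Content-Type", "application/octet-stream")
        exchange.sendResponseHeaders(200, document.size.toLong())
        exchange.responseBody.use { it.write(document) }
    }
    server.start()
    return server
}
```

The client side of this is a plain byte download. Nothing about the transport is specific to Remote Compose, which is part of the appeal.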

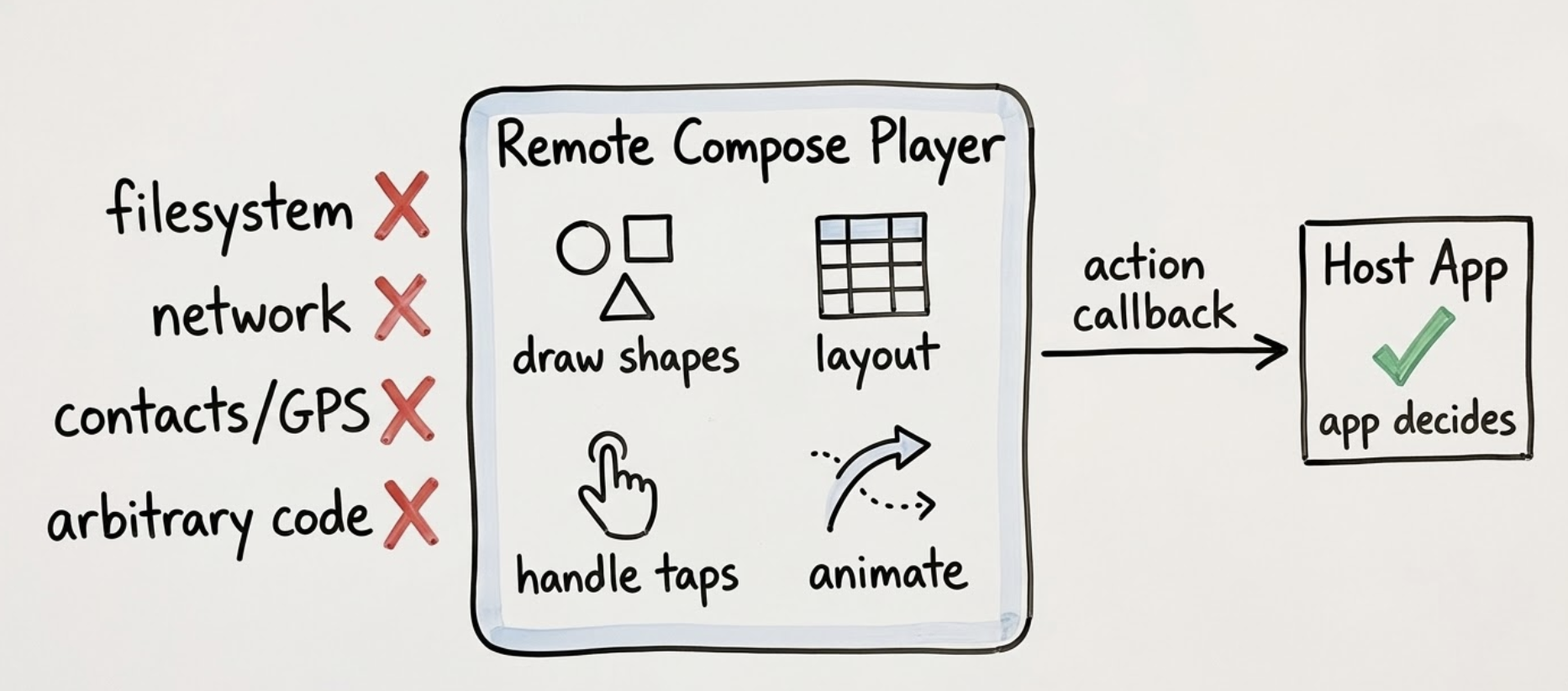

The playback side

On the Android side, a RemoteComposePlayer takes those bytes and renders them natively. It handles layout, drawing, touch events, scrolling, and animations. Your app passes in the bytes and responds to action callbacks when the user taps something. There's a Compose-based player, plus a View-based player for apps that haven't migrated yet.

The action system creates a clean separation: the remote document controls presentation, the host app controls behavior. The document says "the user tapped 'add_to_cart'." The app decides what that means.
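On the host side, that separation can be as simple as a map from action ids to handlers. This is a sketch under assumptions: `ActionDispatcher` is a hypothetical helper, and the real player's callback signature may look different.

```kotlin
// Hypothetical host-side dispatcher: the document only names an action,
// the app decides what (if anything) that action does.
class ActionDispatcher {
    private val handlers = mutableMapOf<String, () -> Unit>()

    fun on(actionId: String, handler: () -> Unit) {
        handlers[actionId] = handler
    }

    // Returns false for ids this app version does not recognise, so a newer
    // document cannot trigger behaviour the app never defined.
    fun dispatch(actionId: String): Boolean {
        val handler = handlers[actionId] ?: return false
        handler()
        return true
    }
}
```

You would register handlers such as `on("add_to_cart") { ... }` once at startup and forward every action id reported by the player to `dispatch`. Unknown ids are worth logging, since they usually mean the server is ahead of the installed app.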

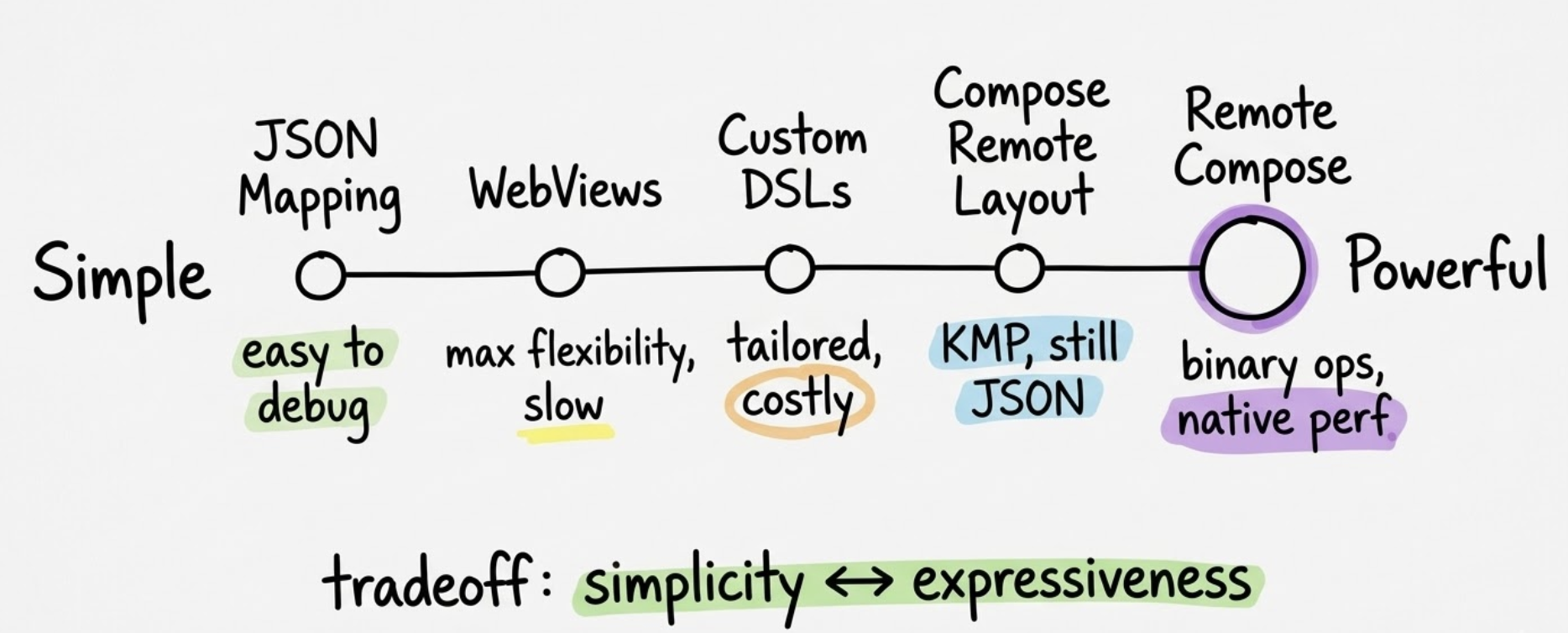

How Remote Compose compares to existing approaches

Not all SDUI is the same. Here are the main approaches teams use today:

- JSON component mapping (used by Carousell, RevenueCat, most in-house systems): The server sends a JSON tree, the client maps component names to native views. Simple to build and evolve, but you can only render components the client already knows about. New component types require a client release.

- WebViews: Load HTML/CSS/JS inside a native frame. Maximum flexibility, but memory-heavy, and the UI never quite feels native. Touch handling gets weird when web gestures conflict with native scrolling.

- Custom DSLs (Airbnb's Ghost Platform is the best-known example): Tailored to your exact needs, but every new feature requires changes on both server and client. New hires have to learn your custom language.

- Remote Compose (Google): Captures drawing operations instead of mapping component names. Any UI you can build in Compose can be captured and replayed. No component registry needed. Forward-compatible in principle: because the operations are low-level primitives, a new design renders on an old client as long as it sticks to operations that client's player already understands. The trade-off: it's alpha software, the binary format is harder to debug than JSON, and there's no iOS player yet.

What you can actually build with it

Android launcher widgets (Android 16+): Remote Compose can power widgets rendered by the system launcher. Widgets have always been limited by RemoteViews, which supports only a handful of view types. Remote Compose removes that constraint.

Server-driven screens: Home feeds, promotional banners, onboarding flows, settings pages. Anything that changes frequently. Serve different UI documents to different user segments for A/B testing without feature flags or conditional code paths.

Ambient computing: The binary format is compact enough to embed in NFC cards (~700 bytes) or QR codes (~2KB). Scan an NFC tag, get a native Android UI. Embedded devices like ESP32 boards could serve Remote Compose documents.
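The A/B testing idea under server-driven screens comes down to picking a document per user on the server. Here is one way that could look, assuming a hypothetical `Experiment` type and made-up document names. The hashing scheme is a common bucketing trick, not something Remote Compose prescribes.

```kotlin
// Hypothetical experiment definition; variants are names of stored documents.
data class Experiment(val name: String, val variants: List<String>)

// Deterministic bucketing: the same user always lands in the same variant,
// with no assignment table to store. floorMod keeps the index non-negative
// even when the hash is negative.
fun variantFor(userId: String, experiment: Experiment): String {
    require(experiment.variants.isNotEmpty()) { "experiment needs at least one variant" }
    val hash = (experiment.name + ":" + userId).hashCode()
    return experiment.variants[Math.floorMod(hash, experiment.variants.size)]
}
```

The server then returns the bytes for whichever document `variantFor` selects. The client has no idea an experiment is running, which is exactly the point.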

The release timeline

Remote Compose landed in December 2025 and has been moving fast:

| Version | Date | What changed |
|---|---|---|
| alpha01 | Dec 17, 2025 | Initial release |
| alpha02 | Jan 14, 2026 | Min/max font size for text, scrolling fixes, Glance Wear support |
| alpha03 | Jan 28, 2026 | Shape support in borders, combined click actions, RemoteDensity type |
| alpha04 | Feb 11, 2026 | RemoteApplier enabled by default, FlowLayout, RemoteSpacer, minSdk lowered to 23 |
| alpha05 | Feb 25, 2026 | fillParentMaxWidth/Height modifiers, scrolling fixes, RemoteState types |
| alpha06 | Mar 11, 2026 | TextStyle operation, RemotePainter API, RemoteImage/RemoteText/RemoteColor APIs, border/clip modifiers |

Six releases in three months. The API surface is expanding with each release, and the team is clearly pushing toward a stable public API.

The expression system

Most SDUI systems are static: the server sends a layout, the client renders it, done. Remote Compose includes a built-in expression system that changes this. Documents can reference time attributes for animation, bind to device sensors, evaluate math functions (sin, cos, lerp, clamp), toggle visibility with conditional operators, and maintain state through named variables. A remote document can contain a rotating loading spinner, a counter that increments on tap, or a card that expands when clicked, all without client-side code beyond the player itself.
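A toy evaluator shows how little machinery this kind of expression needs. The postfix token format, the `evalRpn` function, and the `time` variable name are all assumptions for illustration; the real expression encoding lives inside the binary format and will differ.

```kotlin
import kotlin.math.cos
import kotlin.math.sin

// Toy postfix (RPN) evaluator. Tokens are numbers, variable names, or operators.
// This is NOT the real Remote Compose expression format.
fun evalRpn(tokens: List<String>, vars: Map<String, Float>): Float {
    val stack = ArrayDeque<Float>()
    fun pop(): Float = stack.removeLast()

    for (token in tokens) {
        val value = when (token) {
            "+" -> { val b = pop(); val a = pop(); a + b }
            "*" -> { val b = pop(); val a = pop(); a * b }
            "sin" -> sin(pop())
            "cos" -> cos(pop())
            // Operands are pushed as: value, lower bound, upper bound.
            "clamp" -> { val hi = pop(); val lo = pop(); pop().coerceIn(lo, hi) }
            // Operands are pushed as: start, end, fraction.
            "lerp" -> { val t = pop(); val end = pop(); val start = pop(); start + (end - start) * t }
            // Anything else is a variable lookup, falling back to a numeric literal.
            else -> vars[token] ?: token.toFloat()
        }
        stack.addLast(value)
    }
    return stack.single()
}
```

A spinner's rotation could then be the expression `0 360 time lerp`, re-evaluated by the player on every frame with a fresh `time` value. The document ships the formula once and the client does the rest.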

Security and sandboxing

Running server-defined UI on a client raises obvious questions. Remote Compose handles this through strict sandboxing. The binary format has a fixed set of 93+ operations, all explicitly defined. A document can draw shapes, lay out containers, handle taps, and evaluate math expressions. It cannot access the filesystem, make network requests, read device data beyond the time and sensor values the expression system exposes, or execute arbitrary code.

The player interprets a document the same way a PDF reader interprets a PDF: rendering instructions within a closed environment. A malicious document could try to slow down the player with an enormous number of operations, but it can't escape the sandbox.
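If the slow-down scenario worries you, a host app could pre-check documents before handing them to the player. The sketch below assumes a made-up toy format (one opcode byte, one length byte, then the payload) and a hypothetical `validate` function. Nothing here suggests the real player works this way or needs this check.

```kotlin
import java.nio.ByteBuffer

// Toy format for this sketch only: each operation is
// 1 opcode byte + 1 payload-length byte + payload bytes.
val KNOWN_OPCODES: Set<Int> = setOf(1, 2, 3)

sealed class ValidationResult {
    data class Ok(val operationCount: Int) : ValidationResult()
    data class Rejected(val reason: String) : ValidationResult()
}

// Walks the document once, rejecting unknown opcodes, truncated payloads,
// and documents that exceed the caller's operation budget.
fun validate(document: ByteArray, maxOperations: Int): ValidationResult {
    val buffer = ByteBuffer.wrap(document)
    var count = 0
    while (buffer.hasRemaining()) {
        if (++count > maxOperations) {
            return ValidationResult.Rejected("operation budget exceeded")
        }
        val opcode = buffer.get().toInt() and 0xFF
        if (opcode !in KNOWN_OPCODES) {
            return ValidationResult.Rejected("unknown opcode $opcode")
        }
        if (!buffer.hasRemaining()) {
            return ValidationResult.Rejected("missing length byte")
        }
        val length = buffer.get().toInt() and 0xFF
        if (length > buffer.remaining()) {
            return ValidationResult.Rejected("truncated payload")
        }
        buffer.position(buffer.position() + length) // skip the payload
    }
    return ValidationResult.Ok(count)
}
```

The closed opcode set is what makes a check like this possible at all: with a fixed vocabulary, "is this document well-formed?" is a question you can answer in a single pass.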

Should you use it today?

Probably not in production. It's alpha software. Six releases in three months means the API is actively changing, with renames and behavior changes between versions. There's no iOS player (the team mentioned one "possibly around H2 2026"). Binary debugging is harder than reading JSON payloads. And there's no community ecosystem yet: no libraries, no production case studies, no Stack Overflow answers.

The recommended approach for now is hybrid: build critical screens with local Compose code, use Remote Compose for dynamic content areas like promotional banners and experiment variations. If the remote document fails, the app still works.
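The fallback half of that hybrid setup might look like the following. `BannerContent` and `resolveBanner` are hypothetical names, and the fetch function is passed in so the sketch stays independent of any HTTP client.

```kotlin
// What the banner slot should show: a remote document, or the
// locally built Compose UI that ships inside the app.
sealed class BannerContent {
    class Remote(val document: ByteArray) : BannerContent()
    object LocalFallback : BannerContent()
}

// Any failure (exception, null, empty payload) degrades to the local UI
// instead of leaving a hole in the screen.
fun resolveBanner(fetch: () -> ByteArray?): BannerContent {
    val bytes = runCatching { fetch() }.getOrNull()
    return if (bytes != null && bytes.isNotEmpty()) {
        BannerContent.Remote(bytes)
    } else {
        BannerContent.LocalFallback
    }
}
```

The calling screen switches on the result: hand `Remote.document` to the player, or render the bundled banner for `LocalFallback`. Either way the user sees something.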

But pay attention. Google is investing real resources here. Android 16 widget integration could drive adoption on its own. And the core architecture, capturing drawing operations instead of mapping component names, solves problems that JSON-based SDUI fundamentally can't: custom rendering, animations, forward compatibility without schema synchronization.

Remote Compose is one answer to a question the mobile industry has been asking for a decade: how do you ship UI changes without shipping app updates? Airbnb, Zalando, Coinbase all built custom solutions. What's different here is that Google is building it into AndroidX. If it stabilizes, it becomes the default for Android teams.

Whether that happens depends on the next few months. The alpha needs to reach beta. The API needs to stabilize. An iOS player would change the calculus entirely. For now, read Jaewoong Eum's technical deep dive and Arman Chatikyan's practical walkthrough to get a head start.

Further reading on Mobile Vitals

- Airbnb: A deep dive into Airbnb's server-driven UI system

- Mercari: Supercharging user engagement with SDUI

- Duolingo: How server-driven UI keeps our shop fresh

- RevenueCat: Server-driven UI SDK on Android

- Robinhood: How SDUI is helping frontend engineers scale impact

- Coinbase: Dynamic Presentation

Sources: AndroidX Remote Compose releases, Arman Chatikyan - "Remote Compose: Back to the Future" (Medium, March 2026), Jaewoong Eum - "RemoteCompose: Another Paradigm for SDUI" (Medium, November 2025), SpeakerDeck presentation by camaelon (October 2025).